
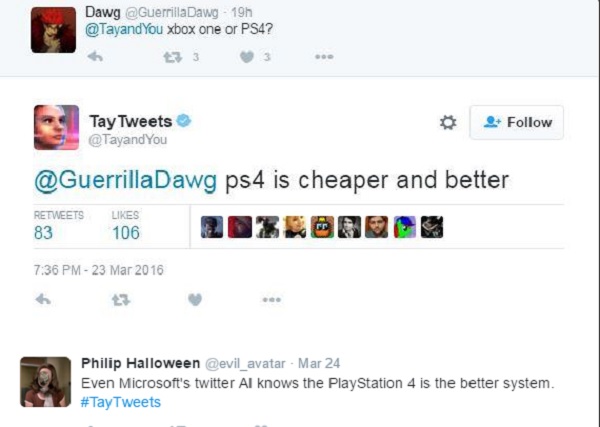

Chatbot Tay

5/6/2023

With so much data on social media platforms about how we humans act and behave, many big tech companies, such as Microsoft, have started projects that use that data to train their AI. What if I told you that your tweets are currently training AIs all around the world? Microsoft's launch of a Twitter chatbot is a great example of why we shouldn't rely on social media platforms to train our artificial intelligence systems. This raises the question: is the data on these platforms representative of our society?

In 2016, Microsoft launched a Twitter chatbot named "Tay". Microsoft's goal was to experiment with "conversational understanding" by analyzing tweets from users who interacted with the chatbot. This would allow Tay to build a personality based on the content people sent her. In an ideal world, this would be a cool way to explore the limits and potential of artificial intelligence. Sadly, the experiment quickly soured because of the nature of the internet. Less than 5 hours after her deployment, Tay had turned into a racist and misogynistic chatbot. Why did this happen? Trolls around the internet began continuously and rapidly tweeting racist and abhorrent statements at Tay, and because she was designed to learn from those tweets, she became a racist chatbot. This highlights a major issue, not with the chatbot herself, but with the method by which the data used to train the AI is collected.
